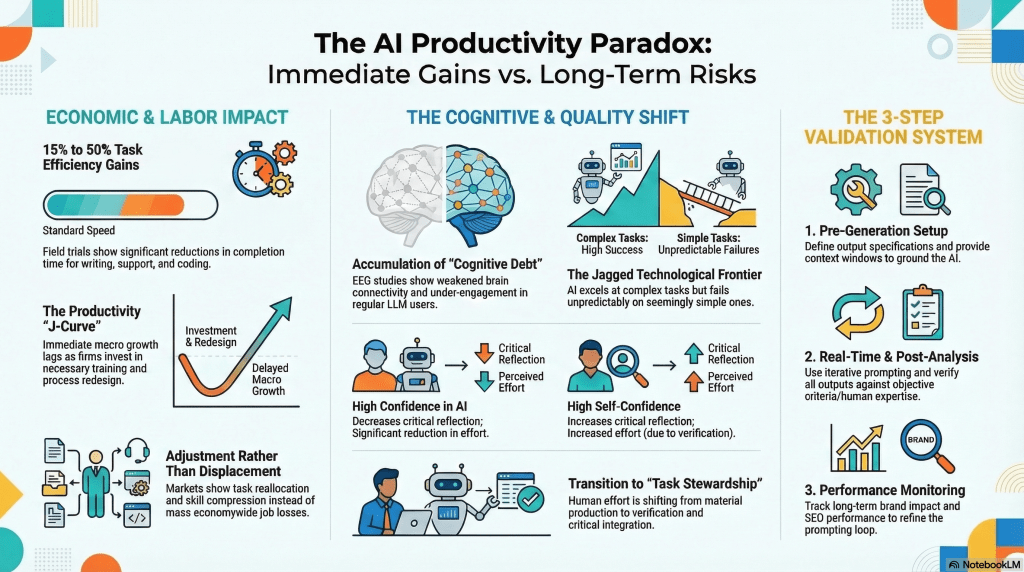

AI tools are delivering real efficiency wins, but they’re also quietly reshaping how workers think, what skills atrophy, and where quality unexpectedly breaks down. Here’s what every business leader needs to understand before going all-in.

The AI Productivity Paradox: a framework for understanding short-term efficiency gains alongside emerging cognitive and organizational risks.

There’s a quiet tension building inside AI-adopting organizations. On one side: real, measurable productivity gains that no serious executive should dismiss. On the other: a set of slower-moving, harder-to-see risks that, left unmanaged, could erode the very capabilities organizations are counting on AI to amplify.

This tension is what researchers and strategists are calling the AI Productivity Paradox and it plays out across three interconnected domains: economic and labor dynamics, cognitive and quality shifts, and the governance frameworks organizations need to navigate both.

The Economic Picture: Real Gains, but Not Instant

Field trials across writing, customer support, and software development consistently show reductions in task completion time of 15% to 50% compared to standard workflows. That’s not marginal, or organizations handling high volumes of routine knowledge work, the compounding effect is substantial.

But those gains don’t show up immediately on the macro balance sheet. The Productivity J-Curve explains why: in the short term, organizations must absorb the costs of training, workflow redesign, and integration before realizing broader economic returns. Leaders who expect instant ROI are often disappointed, and sometimes abandon AI initiatives right before the curve bends upward.

15–50%

Task Efficiency Gains

Observed across writing, support, and coding workflows in field trials.

J-Curve

Delayed Macro Growth

Short-term investment dip precedes longer-term productivity payoff.

Realloc.

Not Mass Displacement

Labor markets show skill compression and task reallocation, not widespread job loss.

The labor story is similarly nuanced. Rather than triggering the mass displacement many feared, current market data points to task reallocation and skill compression, workers shifting away from routine production tasks and toward higher-order judgment, verification, and integration work. The jobs aren’t disappearing; they’re changing shape.

The Cognitive Risks Nobody Is Talking About Enough

The second domain is where the paradox gets genuinely uncomfortable. Even as AI accelerates output, it may be slowly degrading the underlying human capabilities organizations depend on.

“EEG studies are detecting weakened brain connectivity and reduced cognitive engagement in regular LLM users, a phenomenon researchers are calling ‘cognitive debt.'”

The mechanism is straightforward: when AI handles the heavy cognitive lifting, such as drafting, reasoning, and synthesis, users engage less deeply with the material. Over time, the neural pathways for critical analysis and creative problem-solving get less exercise. This isn’t theoretical. It’s showing up in neurological data.

There’s also a troubling dynamic around confidence. Research shows that high confidence in AI output actually reduces critical reflection; users who trust the tool most are the ones who check it least. Paradoxically, workers with stronger domain expertise and higher self-confidence engage more critically with AI outputs, applying greater scrutiny and effort to verification. The implication: organizations may want to invest in building genuine expertise rather than assuming AI can substitute for it.

The Jagged Frontier: Where AI Succeeds and Where It Fails

One of the most practically important insights for teams deploying AI is the Jagged Technological Frontier, as researchers call it. AI doesn’t fail gradually or predictably; it excels at surprisingly complex tasks, then fails unpredictably on seemingly simple ones.

A system that can draft a sophisticated legal brief may stumble on a straightforward date calculation. A coding assistant that generates elegant architecture may introduce subtle bugs in basic conditional logic. This irregularity makes AI harder to supervise than traditional software, because failure modes don’t follow intuitive patterns. Effective oversight requires humans who understand both the domain and the tool’s specific failure landscape.

Key Terms: A Working Glossary

Glossary of Key Concepts

Cognitive Debt: The gradual erosion of critical thinking and analytical capability that occurs when workers habitually offload complex reasoning to AI. Identified through EEG studies showing reduced brain connectivity in regular LLM users.

The Productivity J-Curve: The pattern where AI adoption initially appears to slow macro productivity growth due to training, integration, and redesign costs before generating compounding returns as workflows mature.

The Jagged Technological Frontier: The uneven capability profile of AI systems, which perform exceptionally well on some complex tasks while failing unpredictably on seemingly simpler ones. Makes AI harder to supervise than traditional tools.

Task Stewardship: The emerging human role in AI-augmented workflows: shifting from direct material production to critical verification, quality integration, and strategic oversight of AI-generated outputs.

Skill Compression: The narrowing of human skill sets observed as AI absorbs routine tasks. Workers increasingly perform a smaller range of higher-level functions, with implications for long-term workforce capability and adaptability.

LLM (Large Language Model): The class of AI systems underlying tools like ChatGPT, Claude, and Gemini. Trained on vast text datasets to generate, analyze, and transform language, the engine powering most current enterprise AI productivity tools.

Pre-Generation Setup: The first step in the 3-Step Validation System: defining output specifications and providing sufficient context before prompting AI, to reduce hallucinations and anchor outputs to accurate information.

Context Window: The amount of text an AI model can “see” and process at once. Providing rich context within this window, such as background documents, specifications, and examples, directly improves output quality and reduces error rates.

A Framework for Sustainable AI Use

The infographic’s 3-Step Validation System offers a practical governance structure that addresses both the quality risks and the cognitive risks simultaneously:

Step 1: Pre-Generation Setup

Define output specifications clearly and load the AI’s context window with grounding information before generating anything. This step dramatically reduces hallucinations and misalignments, and it requires the human to engage meaningfully with the task requirements, counteracting cognitive disengagement.

Step 2: Real-Time & Post-Analysis

Use iterative prompting rather than accepting first outputs, and verify all deliverables against objective criteria or domain expertise. This is where task stewardship happens in practice, and where critical reflection must be deliberately preserved against the pull of over-reliance.

Step 3: Performance Monitoring

Track downstream outcomes, brand impact, SEO performance, error rates, and customer responses to close the feedback loop and continuously refine prompting and verification processes. Organizations that treat AI outputs as the end of the workflow, rather than an input to be refined and measured, will accumulate quality debt they won’t see until it’s costly.

“The organizations that will win with AI aren’t those who use it most; they’re those who’ve built the governance, expertise, and culture to use it best.”

The AI Productivity Paradox isn’t an argument against adopting AI tools. The efficiency gains are real, and the competitive pressure to act is legitimate. It’s an argument for how to adopt them: with clear-eyed awareness of the cognitive and quality risks, deliberate governance frameworks, and sustained investment in the human expertise that makes AI outputs actually valuable.

Organizations that manage this balance well will compound both the AI gains and their human capital. Those who don’t will find themselves more efficient at the surface while quietly hollowing out the judgment capabilities they need for anything genuinely difficult.